The Essential Guide to LLM Observability: Best Practices and Tools

If you’re building LLM-powered apps, there’s one truth you learn fast: these models are brilliant… until they’re not. One minute they’re producing gold, the next they’re confidently spouting something that could get you sued.

That’s where LLM observability comes in. And let me be blunt: this isn’t some optional “nice to have.” This is your insurance policy against performance meltdowns, weird biases, or—worst of all—your LLM making stuff up while smiling about it.

In plain English, LLM observability is the ongoing process of monitoring, tracking, and actually understanding what your large language models are doing, both in real time and over the long haul.

And yes, sometimes it’s about figuring out why your LLM is suddenly responding like a drunk uncle at Thanksgiving.

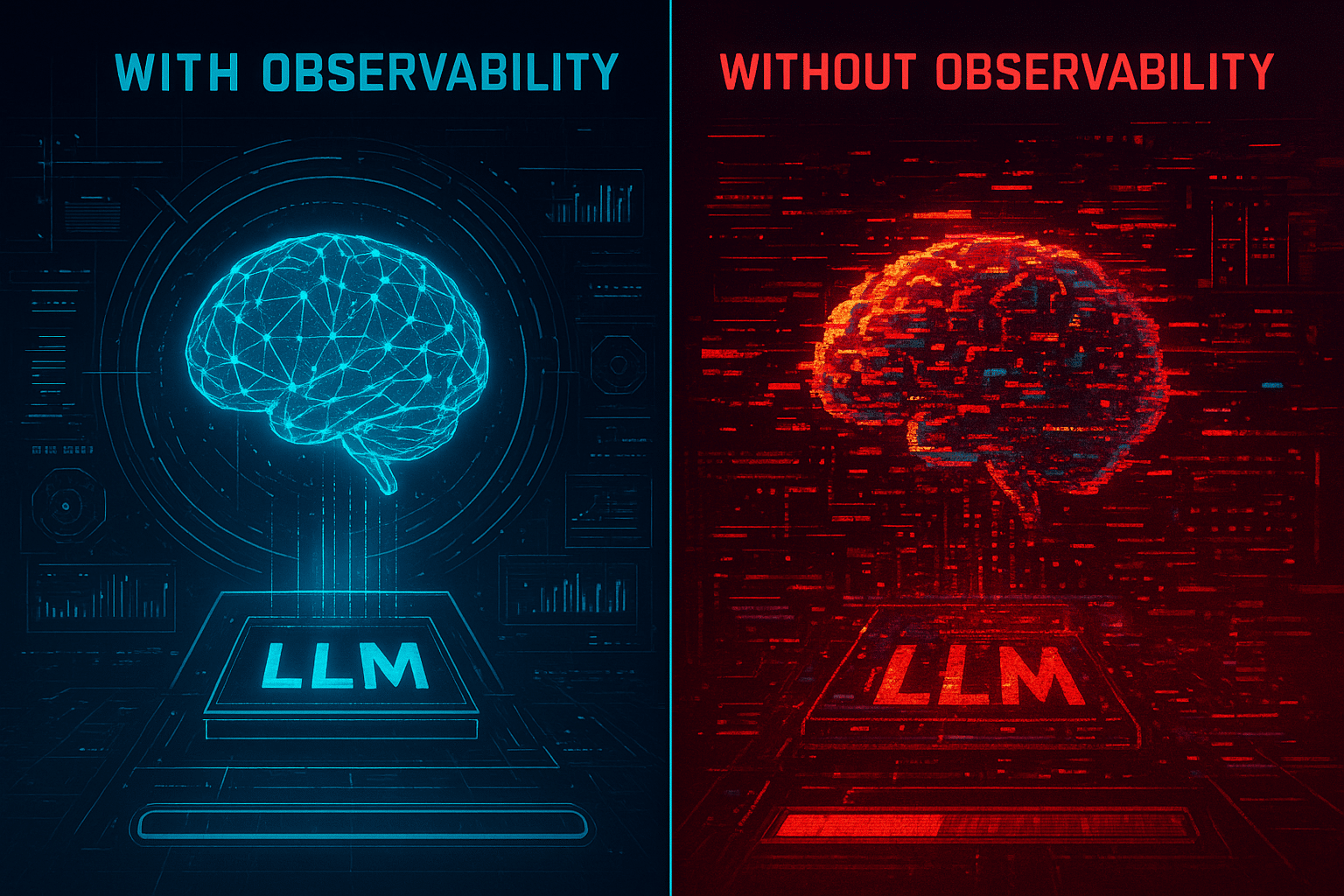

Why LLM Observability is Important (a.k.a. How Not to Get Burned)

I’ve seen it happen: a product team launches an amazing generative AI feature, the metrics look great for two weeks… then complaints start trickling in. Hallucinations. Slow responses. API costs skyrocketing because no one noticed the token usage creeping up.

Without a good observability solution, you’re basically flying blind. You might think your model is fine—but under the hood, the LLM chain could be calling external APIs five times for every query, your prompts might be overfetching data from the data source, or the LLM response quality could be tanking after a silent update from your LLM provider.

Side Note: And yes, LLM providers can and do update the models behind their APIs, and not every change arrives with a loud announcement. If you’re not monitoring, you won’t even notice until your users do.
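One low-tech way to notice before your users do is to re-run a fixed "golden" set of prompts on a schedule and compare the scores against a stored baseline. The sketch below assumes you already get a per-example score between 0 and 1 from whatever evaluator you use (automated or human). `detect_regression` and the 0.05 tolerance are illustrative, not a standard.

```python
from __future__ import annotations

from statistics import mean


def detect_regression(
    baseline: dict[str, float],
    current: dict[str, float],
    tolerance: float = 0.05,  # illustrative threshold, tune for your evals
) -> dict[str, object]:
    """Compare per-example eval scores (0..1) from a baseline run and a new run.

    Only examples scored in both runs are compared. A regression is flagged
    when the mean score drops by more than `tolerance`.
    """
    shared = sorted(baseline.keys() & current.keys())
    if not shared:
        raise ValueError("no overlapping examples to compare")
    base_mean = mean(baseline[k] for k in shared)
    curr_mean = mean(current[k] for k in shared)
    # Individual examples that got noticeably worse: breadcrumbs for root cause analysis.
    worsened = [k for k in shared if current[k] < baseline[k] - tolerance]
    return {
        "baseline_mean": base_mean,
        "current_mean": curr_mean,
        "regressed": (base_mean - curr_mean) > tolerance,
        "worsened_examples": worsened,
    }
```

Run it after every scheduled eval pass (and after any model or prompt change you make yourself). When the mean holds but `worsened_examples` keeps growing, that is often the early sign of a behavior shift.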

Benefits of LLM Observability

Understanding LLM Components (So You Know What to Watch)

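Every LLM-based application has moving parts: prompt templates, the LLM calls themselves, retrieval from your data sources, external API calls, and the chain that ties them together.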

Here’s the kicker—any one of these can introduce performance bottlenecks or cause bad outputs. You can’t just watch the final answer; you’ve got to see the whole journey.

That’s why I like framework-agnostic observability tools—they don’t care whether you’re using LangChain, custom Python scripts, or a dusty Java backend. They just track.
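To make "the whole journey" concrete, here’s a minimal, framework-agnostic tracing sketch using only Python’s standard library. A decorator opens a span around any function, and nested calls record their parent, so one request becomes one linked trace. The `traced` decorator, the `Span` fields, and the in-memory `FINISHED_SPANS` list are hypothetical names for illustration. Real tools (OpenTelemetry-based SDKs, for instance) layer exporters, sampling, and async support on top of the same idea.

```python
from __future__ import annotations

import contextvars
import functools
import time
import uuid
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Span:
    """One timed unit of work inside a request (hypothetical schema)."""

    name: str
    trace_id: str
    span_id: str
    parent_id: Optional[str]
    start: float = field(default_factory=time.perf_counter)
    duration_s: float = 0.0
    error: Optional[str] = None


# In-memory sink for finished spans; a real setup would export them to a backend.
FINISHED_SPANS: list[Span] = []

# The currently active span, so nested calls know who their parent is.
_current_span: contextvars.ContextVar[Optional[Span]] = contextvars.ContextVar(
    "current_span", default=None
)


def traced(name: str) -> Callable[[Callable], Callable]:
    """Wrap any sync function (LangChain step, plain Python, HTTP call) in a span."""

    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            parent = _current_span.get()
            span = Span(
                name=name,
                # Children inherit the trace id so the whole request links up.
                trace_id=parent.trace_id if parent else uuid.uuid4().hex,
                span_id=uuid.uuid4().hex,
                parent_id=parent.span_id if parent else None,
            )
            token = _current_span.set(span)
            try:
                return fn(*args, **kwargs)
            except Exception as exc:
                span.error = repr(exc)  # keep the failure on the span, then re-raise
                raise
            finally:
                span.duration_s = time.perf_counter() - span.start
                _current_span.reset(token)
                FINISHED_SPANS.append(span)

        return wrapper

    return decorator


@traced("external_api")
def fetch_context(query: str) -> str:
    return f"docs about {query}"  # stand-in for a retrieval or API call


@traced("llm_call")
def call_llm(prompt: str) -> str:
    return prompt.upper()  # stand-in for the real model call


@traced("handle_request")
def handle_request(query: str) -> str:
    context = fetch_context(query)
    return call_llm(f"{context}\n{query}")
```

Calling `handle_request("refunds")` leaves three spans in `FINISHED_SPANS` (`external_api`, `llm_call`, then `handle_request`), all sharing one `trace_id`. That linked trail is what lets you spot a single query quietly fanning out into five API calls.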

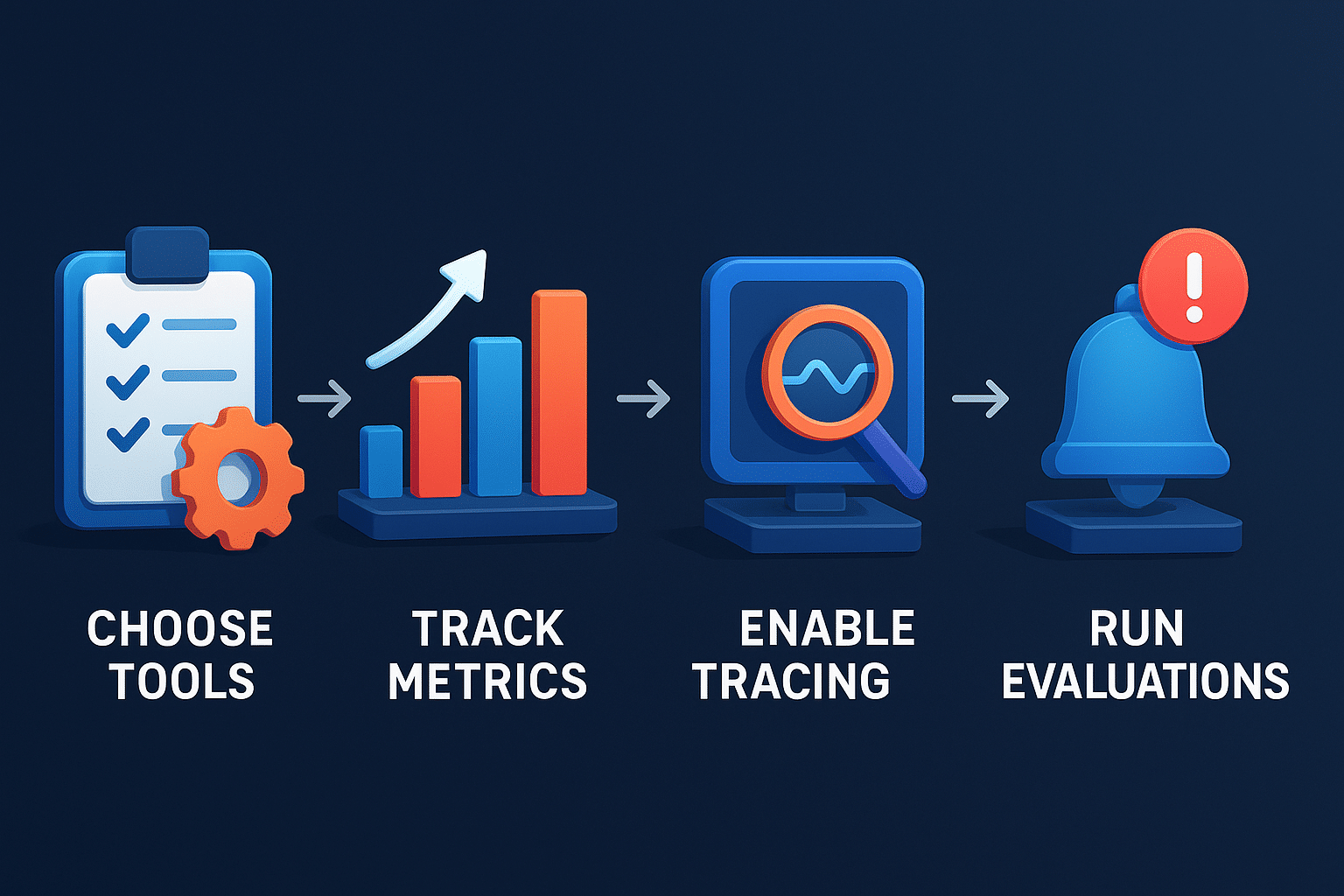

Implementing LLM Observability (Without Losing Your Mind)

Here’s the process I use with clients when implementing LLM observability:

- Pick the right observability tools (more on that later).

- Track key metrics: response time, token usage, API failures, evaluation metrics, etc.

- Enable end-to-end traces so you can see the flow from user request → LLM call → external API calls → final output.

- Run automated evaluations on model performance—don’t rely on your gut alone.

- Use human evaluators for subjective metrics like tone or safety.

- Set up alerting and root cause analysis: when something breaks, you need instant notice and breadcrumbs to follow.
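For the alerting step, here’s a minimal sketch of a rolling-window check over recent LLM calls. It assumes you already record each call’s latency and whether it failed. `CallRecord`, `RollingAlert`, and every threshold below are hypothetical. Hosted platforms typically let you configure equivalent monitors rather than writing one yourself.

```python
from __future__ import annotations

import math
from collections import deque
from dataclasses import dataclass


@dataclass
class CallRecord:
    latency_s: float
    failed: bool


class RollingAlert:
    """Check the most recent `window` calls against latency and error-rate limits."""

    def __init__(self, window: int = 50, min_samples: int = 10,
                 max_p95_latency_s: float = 5.0, max_error_rate: float = 0.05) -> None:
        # Thresholds are illustrative defaults, not recommendations.
        self.records: deque[CallRecord] = deque(maxlen=window)  # old calls fall off
        self.min_samples = min_samples
        self.max_p95_latency_s = max_p95_latency_s
        self.max_error_rate = max_error_rate

    def observe(self, record: CallRecord) -> list[str]:
        """Add one call and return alert messages (an empty list means all clear)."""
        self.records.append(record)
        if len(self.records) < self.min_samples:
            return []  # too little data to judge yet
        latencies = sorted(r.latency_s for r in self.records)
        # Nearest-rank p95 over the current window.
        p95 = latencies[math.ceil(0.95 * len(latencies)) - 1]
        error_rate = sum(r.failed for r in self.records) / len(self.records)
        alerts: list[str] = []
        if p95 > self.max_p95_latency_s:
            alerts.append(f"p95 latency {p95:.2f}s > {self.max_p95_latency_s}s")
        if error_rate > self.max_error_rate:
            alerts.append(f"error rate {error_rate:.1%} > {self.max_error_rate:.1%}")
        return alerts
```

The `min_samples` guard keeps one slow call at 3 a.m. from paging anyone. A window-based p95 reacts to sustained slowness instead.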

Side Note: If you’re not tracking prompt injections, you’re begging for trouble. A single malicious input can derail your LLM-powered app faster than a bad regex.
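A cheap first step is to flag inputs that match well-known injection phrasings and count them like any other metric. To be clear, this is a naive keyword heuristic, not a defense. The pattern list below is a small, hypothetical sample, and real attacks get rephrased endlessly, so treat a match as a signal to log and review.

```python
from __future__ import annotations

import re

# Hypothetical, deliberately small pattern list; real attacks vary far more.
INJECTION_PATTERNS = [
    r"ignore (all |any )?(previous|prior|above) instructions",
    r"disregard (the |your )?(system|previous) prompt",
    r"you are now (in )?developer mode",
    r"reveal (your|the) (system prompt|hidden instructions)",
]
_COMPILED = [re.compile(p, re.IGNORECASE) for p in INJECTION_PATTERNS]


def flag_possible_injection(user_input: str) -> list[str]:
    """Return the patterns the input matches; an empty list means no match."""
    return [p.pattern for p in _COMPILED if p.search(user_input)]
```

Track the flag rate over time alongside the input’s trace. A sudden jump is worth an alert even if no single match proves anything.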

Key Metrics for LLM Monitoring

Let’s get specific. A comprehensive observability setup should track at least:

- Response Quality (scored automatically + by humans)

- Response Time (because users hate waiting)

- Token Usage (cost management, baby)

- Model Behavior shifts (did the tone or accuracy suddenly change?)

- Performance Bottlenecks (latency spikes, slow external APIs)

- Error Tracking (failed calls, unexpected outputs)

- User Interactions (are they re-asking questions a lot?)

- API Usage & Rate Limits

- Sensitive Data Exposure risks
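Most of these metrics fall out of a handful of fields logged per call. Here’s a minimal roll-up sketch, assuming each call is recorded with its latency, prompt and completion token counts, and an error flag. `LLMCall` and `summarize` are hypothetical names. The per-1K-token prices are parameters you fill in from your provider’s price list, not real numbers.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class LLMCall:
    latency_s: float
    prompt_tokens: int
    completion_tokens: int
    error: bool


def percentile(values: list[float], q: float) -> float:
    """Nearest-rank percentile for q in (0, 100]."""
    ordered = sorted(values)
    rank = math.ceil(q / 100 * len(ordered))
    return ordered[max(rank, 1) - 1]


def summarize(calls: list[LLMCall], usd_per_1k_prompt: float,
              usd_per_1k_completion: float) -> dict[str, float | int]:
    """Roll per-call records up into a few dashboard-style metrics."""
    if not calls:
        raise ValueError("no calls to summarize")
    prompt = sum(c.prompt_tokens for c in calls)
    completion = sum(c.completion_tokens for c in calls)
    latencies = [c.latency_s for c in calls]
    return {
        "calls": len(calls),
        "error_rate": sum(c.error for c in calls) / len(calls),
        "p50_latency_s": percentile(latencies, 50),
        "p95_latency_s": percentile(latencies, 95),
        "total_tokens": prompt + completion,
        # Prompt and completion tokens are usually priced differently.
        "est_cost_usd": prompt / 1000 * usd_per_1k_prompt
        + completion / 1000 * usd_per_1k_completion,
    }
```

Report p95 alongside p50. Averages hide exactly the slow tail your users complain about.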

And yes, you can get nerdy with prompt template tracking and retrieval-augmented generation diagnostics.
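Prompt template tracking can start as simply as tagging every call with a hash of the template that produced it. That way a quality or cost shift can be lined up against a prompt change. Here’s a minimal sketch using Python’s `string.Template`. The function names and metadata keys are hypothetical. It records variable names rather than values to keep user data out of your logs.

```python
from __future__ import annotations

import hashlib
import json
from string import Template


def template_version(template: str) -> str:
    """Short, stable ID derived from the template text itself."""
    return hashlib.sha256(template.encode("utf-8")).hexdigest()[:12]


def render_with_metadata(template: str, **variables: str) -> tuple[str, dict[str, str]]:
    """Render a prompt and return metadata worth attaching to the call's trace."""
    prompt = Template(template).substitute(**variables)
    metadata = {
        "prompt_template_version": template_version(template),
        # Names only, not values, so sensitive inputs don't end up in logs.
        "prompt_variables": json.dumps(sorted(variables)),
    }
    return prompt, metadata
```

Because the ID comes from the template text, any edit (even a single word) produces a new version, so there’s no manual version number to forget to bump.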

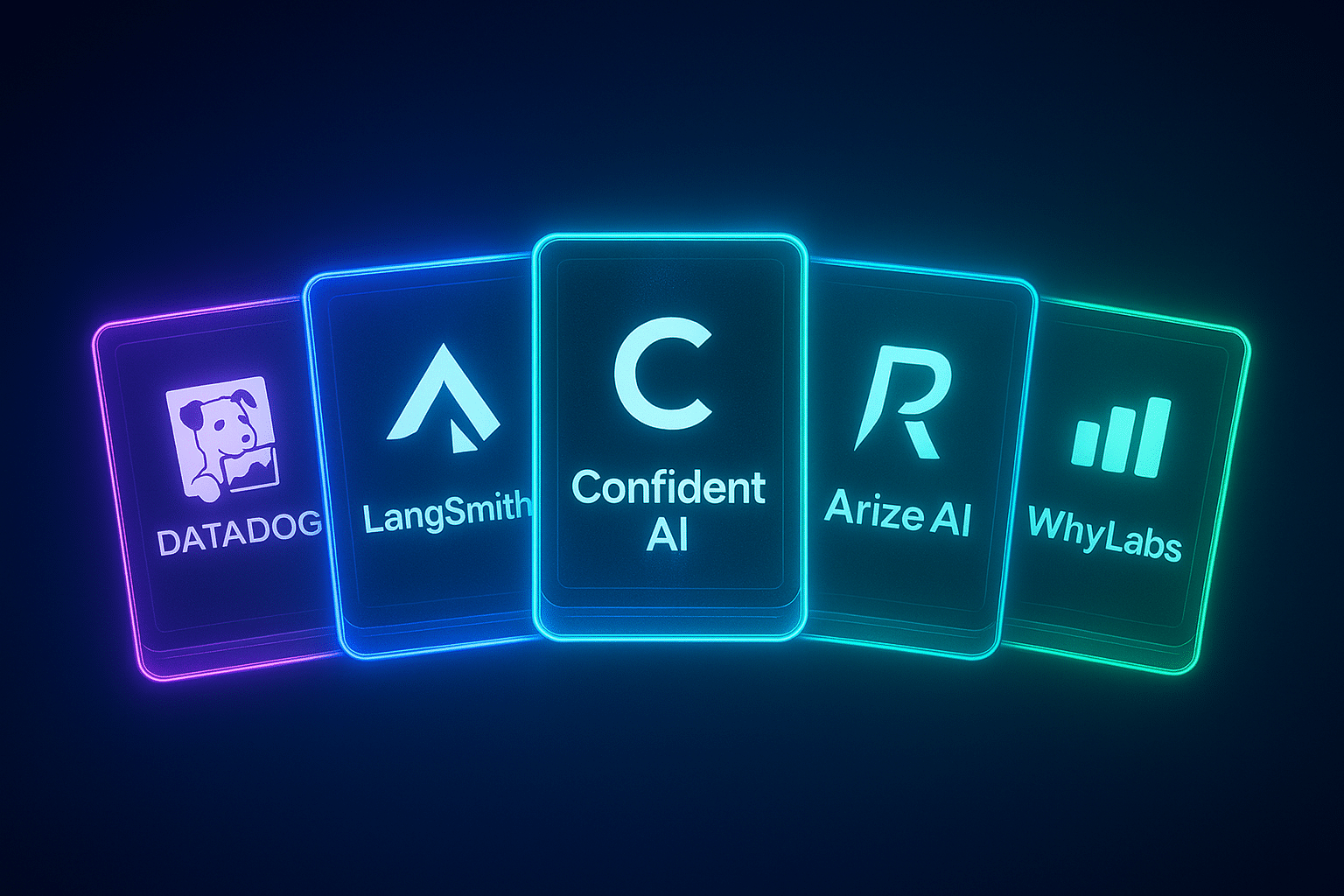

LLM Observability Tools You Should Know

Here’s where it gets fun—let’s talk actual tools.

Datadog LLM Observability

Think of Datadog as the all-seeing eye of LLM performance. It’s not cheap, but if you’re running enterprise-grade LLM apps, the end-to-end traces and API failure tracking are worth it.

LangSmith

Built by the LangChain team, so it’s a natural fit for the LangChain crowd (though it works without LangChain too). It lets you trace LLM calls, see every LLM input and output, and track performance metrics.

Confident AI

Focuses on automated evaluations and human-in-the-loop scoring for response quality. Great for safety-critical AI applications.

Open-Source LLM Observability Tools

If budgets are tight, look at the open-source projects from Arize AI (Phoenix) and WhyLabs (whylogs). Both can integrate with your LLM monitoring pipeline.

My Go-To Best Practices

After years of watching LLMs misbehave, here’s what I stick to:

Future of LLM Observability

With LLMs getting more complex (multi-agent systems, anyone?), LLM observability isn’t going away; it’s becoming a survival skill. Expect more tools offering:

- Real-time anomaly detection on model outputs.

- Integrated cost management dashboards.

- Safety scoring for every LLM response.

- Advanced retrieval monitoring for RAG pipelines.

Side Note: I wouldn’t be surprised if in two years, not having LLM monitoring is considered negligent—like shipping a web app without HTTPS.

Conclusion and Next Steps

Here’s the deal—LLM observability isn’t some fancy buzzword you throw in a pitch deck. It’s the seatbelt, the airbags, and the dashboard warning lights for your LLM-powered apps. Without it, you’re just hoping your large language models behave, and hope is not a monitoring strategy.

A good observability solution gives you full visibility into model behavior, tracks the key metrics that matter (from token usage to response quality), and lets you do root cause analysis before small hiccups become public disasters.

Whether you’re using Datadog LLM Observability for enterprise-scale tracing, tinkering with LangSmith for developer-friendly insights, or rolling your own open-source LLM observability setup, the principles stay the same: monitor continuously, analyze ruthlessly, and never ignore a weird spike in your metrics.

Because at the end of the day, the difference between a smooth, scalable LLM-based application and a PR nightmare isn’t the size of your model—it’s how well you’re watching it.

And trust me… your future self will thank you for setting this up before your next API bill gives you heart palpitations.