Boost Your LLM Performance with Datadog LLM Observability Insights

Datadog LLM Observability — Why You Should Care

Let’s be real. Running LLM-powered apps in production isn’t all rainbows and unicorns. Models hallucinate, prompts break, latency spikes at the worst times, and suddenly your “AI-powered customer support agent” is spitting out gibberish that sounds like it was written by a caffeinated intern.

That’s where Datadog LLM Observability comes in. It’s not just another monitoring tool—it’s like strapping a black box recorder, a performance coach, and a lie detector onto your LLM stack.

Observability isn’t optional anymore. If your LLM apps are serving real users, you need a way to see what went in, what came out, how long it took, and whether anything broke.

Side note: If you’re in SaaS, e-commerce, or literally any business putting LLMs in production, this is the difference between “AI magic” and “AI meltdown.”

What Is LLM Observability Anyway?

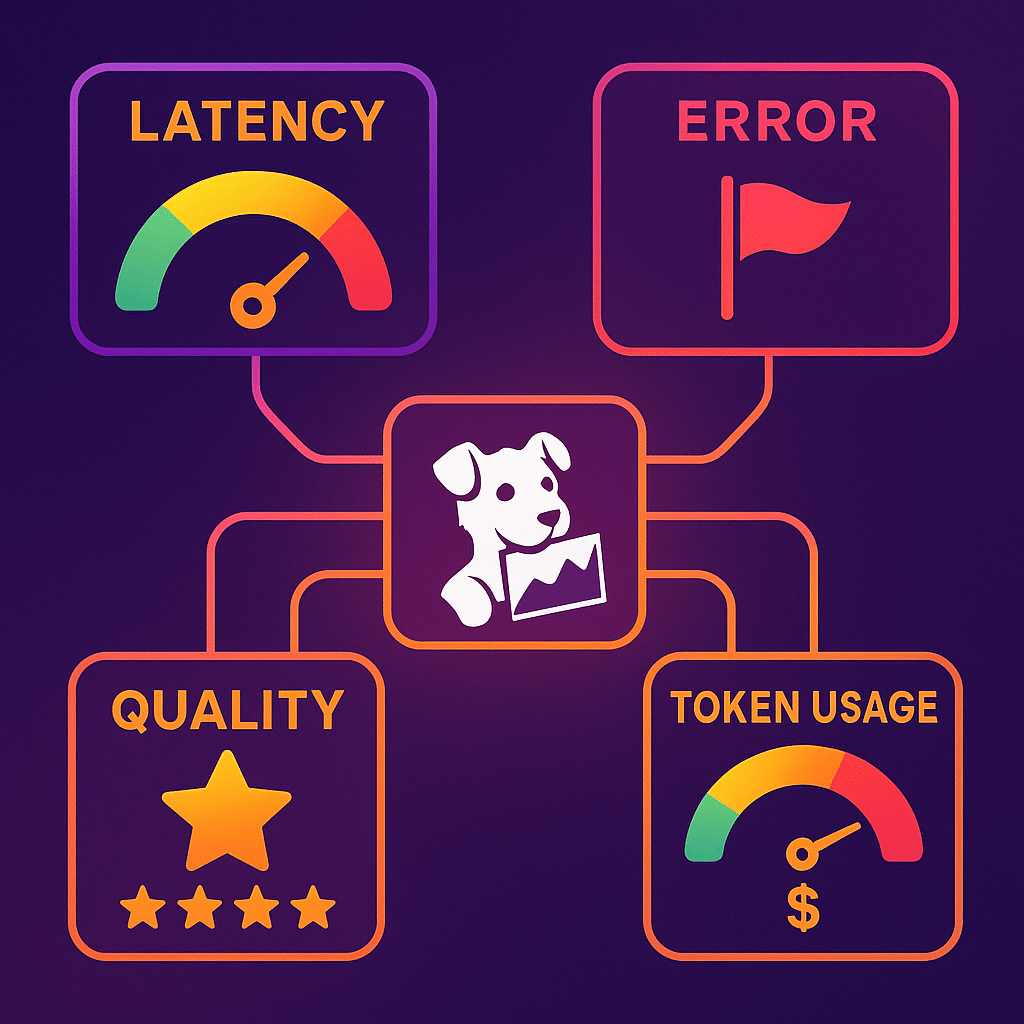

At its core, LLM observability is about visibility. You want to know:

- What went in (input).

- What came out (output).

- How long it took (latency metrics).

- Whether it broke something (errors).
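To make those four signals concrete, here is a minimal sketch in plain Python of what capturing them for a single call could look like. This is not Datadog's SDK; `CallRecord`, `observe_call`, and `fake_model` are hypothetical names made up for illustration, and a real setup would ship the record to your observability backend instead of printing it.

```python
import time
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class CallRecord:
    """One observed LLM call: input, output, latency, and error."""
    input_text: str
    output_text: Optional[str]
    latency_ms: float
    error: Optional[str]


def observe_call(model_fn: Callable[[str], str], prompt: str) -> CallRecord:
    """Run model_fn(prompt) and record what went in, what came out,
    how long it took, and whether it failed."""
    start = time.perf_counter()
    output, error = None, None
    try:
        output = model_fn(prompt)
    except Exception as exc:  # keep the failure as data instead of losing it
        error = f"{type(exc).__name__}: {exc}"
    latency_ms = (time.perf_counter() - start) * 1000
    return CallRecord(prompt, output, latency_ms, error)


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model client."""
    if not prompt.strip():
        raise ValueError("empty prompt")
    return prompt.upper()


ok = observe_call(fake_model, "hello")
bad = observe_call(fake_model, "   ")
print(ok.output_text, ok.error)    # HELLO None
print(bad.output_text, bad.error)  # None ValueError: empty prompt
```

The point of the wrapper is that a failed call still produces a record, so errors show up next to latency and input instead of vanishing into a stack trace.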

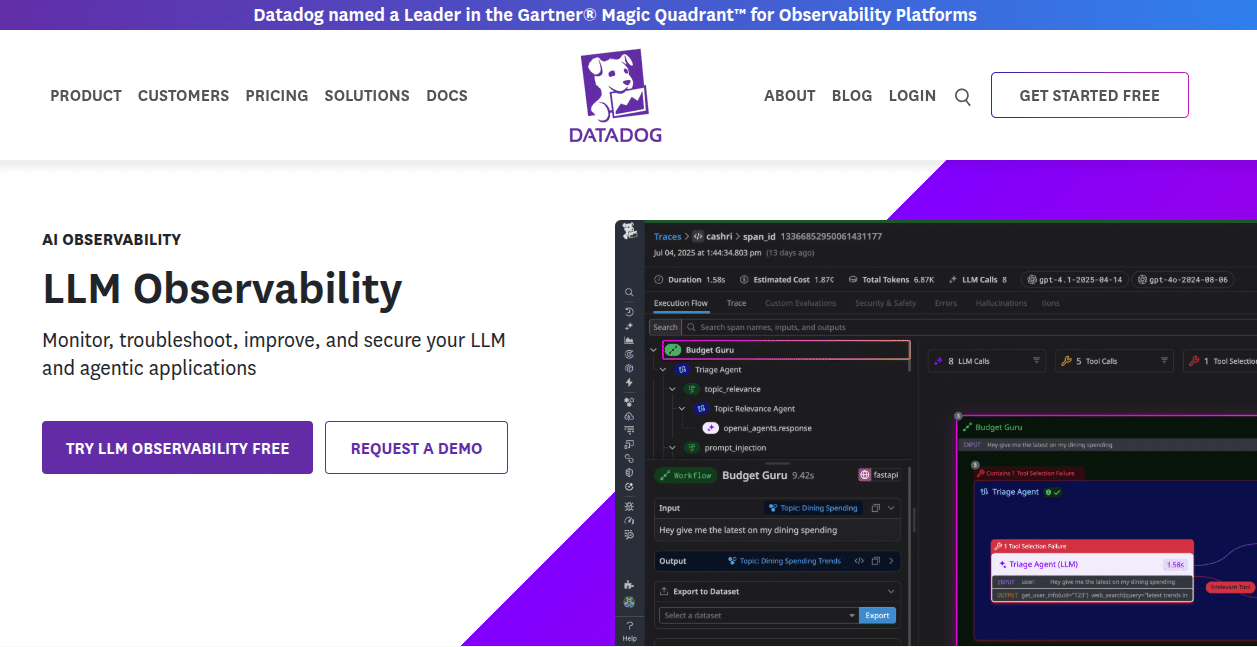

Datadog takes this one step further with LLM traces—full-blown workflows that show you exactly how your app, prompts, tools, and models behave in real time.

Think of it like an MRI for your AI app’s brain.

📌 Check it out: Datadog’s official LLM Observability docs

LLM Applications: The Good, The Bad, The Ugly

LLM applications are everywhere now: chatbots, summarizers, RAG pipelines, recommendation engines, you name it. But here’s the thing nobody tells you at the demo stage:

Without observability, you’re flying blind. And when production breaks, it’s a nightmare trying to figure out whether it was the model, the agent configuration, or that one “sophisticated prompting technique” someone added on a Friday.

Side rant: Prompt engineering is cool and all, but let’s be honest—half of it is glorified trial and error. Without observability, you’re basically hoping your prompts don’t blow up in front of users.

Datadog LLM: What It Brings to the Table

So why Datadog? Because it already does application performance monitoring (APM) like a champ, and now it’s extending that muscle into LLMs.

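Here’s what you get: end-to-end traces, token usage, agent debugging, and operational performance monitoring, all in one place.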

And the magic sauce? You can correlate LLM performance with infrastructure issues. So if your RAG agent is choking because your database is lagging, Datadog shows you the chain reaction.

That’s a game changer.

The LLM Agent Factor

Agents are where things get spicy. They’re calling tools, chaining prompts, juggling APIs—and when they go rogue, debugging feels like chasing a squirrel through traffic.

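Datadog lets you trace each step an agent takes, including the prompts it chains and the tools it calls, so you can see where a run went off the rails.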

It’s basically therapy for your LLM agents.

Monitoring & Troubleshooting: No More Guesswork

Troubleshooting LLM apps used to feel like detective work without the fun hat. But with Datadog:

- You see traces of every request, response, and error.

- You can investigate root causes (is it the model, the prompt, the infra?).

- You can monitor operational performance in real time.

Example: Let’s say your app suddenly starts producing irrelevant answers. Instead of guessing whether it’s the retrieval step, the LLM, or your caching layer—you can literally follow the trace and pinpoint the culprit.
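Here is a rough sketch of what "following the trace" means in code. It assumes a trace is a tree of spans with some metadata on each step; the `Span` class, the `docs_returned` field, and the `empty_retrieval` check are all hypothetical, not Datadog's data model. Irrelevant answers usually do not raise an exception, so the sketch looks for a suspicious step (a retrieval that returned nothing) rather than an error.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Span:
    """One step in a request: a workflow, retrieval, cache lookup, LLM call."""
    name: str
    kind: str
    metadata: Dict[str, object] = field(default_factory=dict)
    children: List["Span"] = field(default_factory=list)


def find_suspects(
    span: Span,
    is_suspect: Callable[[Span], bool],
    path: Tuple[str, ...] = (),
) -> List[str]:
    """Walk the trace depth-first and return the path to every suspect span."""
    here = path + (span.name,)
    hits = [" > ".join(here)] if is_suspect(span) else []
    for child in span.children:
        hits.extend(find_suspects(child, is_suspect, here))
    return hits


def empty_retrieval(span: Span) -> bool:
    """Hypothetical check: a retrieval step that returned zero documents."""
    return span.kind == "retrieval" and span.metadata.get("docs_returned", 0) == 0


# A made-up trace for one request that produced an irrelevant answer.
trace = Span("answer_question", "workflow", children=[
    Span("cache_lookup", "cache", {"hit": False}),
    Span("retrieve_docs", "retrieval", {"docs_returned": 0}),
    Span("generate_answer", "llm"),
])

suspects = find_suspects(trace, empty_retrieval)
print(suspects)  # ['answer_question > retrieve_docs']
```

In this made-up trace the LLM did its job with nothing to work from, so the fix belongs in the retrieval step, not the prompt.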

Side note: This is the difference between a 2-hour fix and a 2-week fire drill.

Operational Performance: Beyond Just Metrics

Here’s what matters most in production: latency, cost, quality, and errors.

Datadog lets you tie all these together. You’re not just looking at “latency = 300ms.” You’re seeing why latency is 300ms—maybe it’s a tool call, maybe it’s a bigger model being invoked, maybe it’s just bad config.
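A small sketch of that "why is it 300ms" question, under some loud assumptions: the span names, kinds, and durations below are invented, and the steps are treated as sequential and non-overlapping so their durations simply add up. Real traces with parallel steps would need a more careful calculation.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical spans from one 300 ms request: (name, kind, duration in ms).
spans: List[Tuple[str, str, float]] = [
    ("retrieve_docs", "retrieval", 40.0),
    ("call_search_tool", "tool", 150.0),
    ("generate_answer", "llm", 100.0),
    ("format_reply", "task", 10.0),
]


def latency_by_kind(
    spans: List[Tuple[str, str, float]],
) -> List[Tuple[str, float, float]]:
    """Sum duration per span kind and return (kind, ms, share of total),
    largest contributor first."""
    totals: Dict[str, float] = defaultdict(float)
    for _name, kind, duration_ms in spans:
        totals[kind] += duration_ms
    total = sum(totals.values())
    if total == 0:
        return []
    rows = [(kind, ms, ms / total) for kind, ms in totals.items()]
    return sorted(rows, key=lambda row: row[1], reverse=True)


breakdown = latency_by_kind(spans)
for kind, ms, share in breakdown:
    print(f"{kind:<10} {ms:6.1f} ms  {share:5.1%}")
```

With these invented numbers the tool call accounts for half of the request, which is a very different fix from swapping in a smaller model.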

And the kicker: Datadog helps you reduce downtime. That’s not just observability—it’s business impact.

Best Practices (a.k.a. Things You’ll Thank Yourself For Later)

If you’re rolling out Datadog LLM Observability, here’s what I’d recommend:

- Start with clear goals. Don’t just “monitor everything.” Decide what matters: latency? cost? quality?

- Configure your agents properly. Capture input, output, and tool usage. Future you will thank present you.

- Integrate with existing APM. LLM monitoring isn’t separate—it’s part of your stack.

- Review traces weekly. Don’t wait for users to report issues. Proactive beats reactive.

- Experiment with prompts—but measure results. If a “sophisticated prompting technique” actually makes latency worse, you’ll know.
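For that last point, "measure results" can start as simply as comparing latency samples from two prompt variants. The sketch below uses only Python's standard library; the sample numbers, the 10% tolerance, and the function names are assumptions for illustration, and in practice the samples would come from your traces rather than hard-coded lists.

```python
import statistics
from typing import Dict, List


def summarize(latencies_ms: List[float]) -> Dict[str, float]:
    """Median and 95th-percentile latency for one prompt variant."""
    return {
        "median_ms": statistics.median(latencies_ms),
        # quantiles(n=20) returns 19 cut points; the last is the 95th percentile
        "p95_ms": statistics.quantiles(latencies_ms, n=20)[-1],
    }


def compare(
    baseline_ms: List[float],
    candidate_ms: List[float],
    tolerance: float = 0.10,
) -> Dict[str, object]:
    """Flag the candidate prompt if its median latency exceeds the
    baseline median by more than the given tolerance."""
    base, cand = summarize(baseline_ms), summarize(candidate_ms)
    return {
        "median_delta_ms": cand["median_ms"] - base["median_ms"],
        "p95_delta_ms": cand["p95_ms"] - base["p95_ms"],
        "regressed": cand["median_ms"] > base["median_ms"] * (1 + tolerance),
    }


# Made-up latency samples (ms) for the current prompt and a fancier one.
baseline = [280, 300, 310, 295, 305, 290, 320, 300]
candidate = [410, 390, 450, 400, 420, 405, 430, 415]

result = compare(baseline, candidate)
print(result["median_delta_ms"], result["regressed"])  # 112.5 True
```

Latency is only one axis here. The same comparison works for cost or a quality score, as long as you decide up front which one you are optimizing.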

Why This Matters for Companies (Not Just Devs)

This isn’t just a dev thing. For companies, LLM observability = safety + reliability + savings.

And let’s be honest—your customers don’t care about how cool your prompts are. They care about fast, accurate, useful responses. That’s what observability helps guarantee.

Conclusion: Stop Flying Blind

Here’s the bottom line: LLM observability is not optional anymore.

Datadog gives you a unified observability platform that doesn’t just monitor infra and apps—it now covers your LLMs too. From traces to token usage, from agent debugging to operational performance, it’s the kind of visibility that keeps your AI projects from imploding at scale.

If you’re building LLM-based applications—whether you’re a SaaS founder, an enterprise CTO, or that poor dev who got voluntold to “add AI to the product”—you need this.

👉 Try Datadog’s LLM Observability and see what’s really going on inside your AI apps.

Side note to wrap it up: let’s be real. LLMs are amazing, but without observability, they’re basically expensive guess machines. And guesswork doesn’t scale.