What Are LLMs: Understanding Large Language Models and Their Impact

So… what are LLMs, anyway?

Let’s be real—everybody’s throwing around the term “LLM” like it’s candy at a parade. But ask most people what it actually means? You’ll probably get a blank stare, or worse, “Uhh… it’s like ChatGPT, right?”

Yes… but also no.

LLM stands for Large Language Model. And these things are not just your friendly chatbot—they’re the engines under the hood of the entire generative AI wave. Think of them as statistical jugglers of human language. They eat training data by the terabyte, crunch patterns using neural networks, and spit out text that sounds like it came straight from a human brain (sometimes smarter, sometimes hilariously dumb).

If you’ve ever asked ChatGPT to write code, translate Spanish slang, or even crank out a bad haiku, that’s an LLM at work.

👉 Side note: If you’re in SaaS, SEO, or marketing, understanding LLMs isn’t just nerd trivia—it’s career insurance. These models are rewriting workflows, content pipelines, and even customer support. Ignore them, and you’ll sound like someone who still says “the Facebook.”

What Are LLMs (For Real)

At their core, large language models (LLMs) are a type of artificial intelligence designed to process, understand, and generate human language. They do this through deep learning techniques—specifically transformer-based neural networks.

They’re trained on vast datasets (think: books, research papers, websites, Wikipedia rabbit holes, Reddit arguments—you name it) so they can “learn” the patterns of written language.

Instead of memorizing every sentence (which would be impossible), they use probability to predict the next word (more precisely, the next token) in a sequence. That’s why you’ll often hear LLMs described as statistical language models.
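To make "guess the next word with probability" concrete, here's a minimal sketch of a bigram counter. It is nowhere near a real LLM (no neural network, and the corpus is a made-up toy string), but it shows the same core idea: count what tends to follow what, then turn the counts into probabilities. The function names are invented for this example.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus, purely for illustration.
corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."

def train_bigrams(text):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    words = text.split()
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def next_word_probabilities(counts, word):
    """Turn raw follow-up counts into probabilities that sum to 1."""
    followers = counts[word]
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()}

counts = train_bigrams(corpus)
probs = next_word_probabilities(counts, "the")
best = max(probs, key=probs.get)
print(probs)
print("Most likely word after 'the':", best)
```

In this toy corpus, "cat" follows "the" more often than anything else, so it wins. An LLM makes the same kind of bet, just with a neural network and a lot more context than one previous word.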

Is that groundbreaking? Yes. Does it also sound like a glorified autocomplete? Also yes. But don’t be fooled—autocomplete never wrote functioning Python scripts or summarized 100 pages of proprietary data in three seconds.

👉 For the nerds: If you want a more technical dive, OpenAI has a good primer on how language models work.

How Large Language Models Work (Without Melting Your Brain)

Okay, buckle up. Here’s the “explain it like I’m five” version:

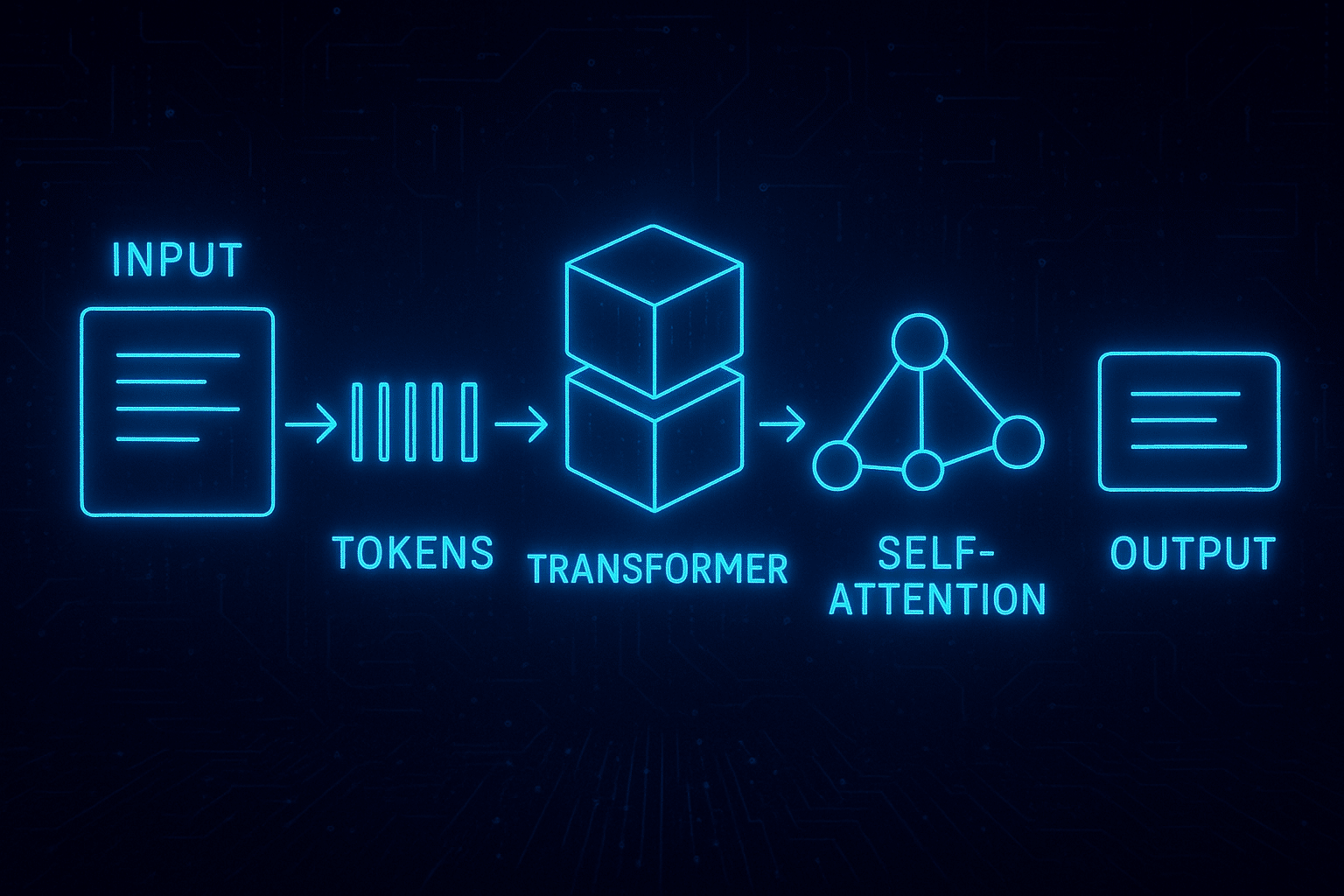

Input Data → You type in something (a question, a prompt, a random request).

Tokenization → The model chops your text into smaller units called tokens. A token could be a whole word, part of a word, or even a single character or punctuation mark.

Transformer Model → This is the basic architecture. Transformers are great at handling sequential data (aka text).

Self-Attention Mechanism → Fancy way of saying: the model figures out which words in your sentence matter most in relation to each other. (“Bank” in “river bank” doesn’t equal “bank” in “money bank.”)

Prediction → The model calculates probabilities of what word (token) should come next.

Output → Voilà. You get human-like language, code, or sometimes, utter nonsense.
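If you'd rather see those steps as code, here's a rough sketch of the flow: tokenize, score every token in the vocabulary, convert scores to probabilities, pick the winner. Everything in it is an assumption made for illustration: the seven-word vocabulary, the whitespace tokenizer (real models use subword tokenizers), and especially `fake_model`, which is a hard-coded stand-in for the transformer.

```python
import math

# Hypothetical toy vocabulary; real models use tens of thousands of subword tokens.
VOCAB = ["<unk>", "the", "river", "bank", "is", "muddy", "closed"]
TOKEN_TO_ID = {tok: i for i, tok in enumerate(VOCAB)}

def tokenize(text):
    """Split on whitespace and map each piece to an integer ID (unknown words -> 0)."""
    return [TOKEN_TO_ID.get(piece, 0) for piece in text.lower().split()]

def softmax(scores):
    """Convert raw scores (logits) into probabilities that sum to 1."""
    biggest = max(scores)
    exps = [math.exp(s - biggest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def fake_model(token_ids):
    """Stand-in for the transformer: returns one made-up score per vocabulary entry."""
    scores = [0.0] * len(VOCAB)
    if TOKEN_TO_ID["river"] in token_ids:
        scores[TOKEN_TO_ID["muddy"]] = 2.0  # "river" in the context favors "muddy"
    scores[TOKEN_TO_ID["closed"]] = 1.0
    return scores

ids = tokenize("The river bank is")
probs = softmax(fake_model(ids))
next_token = VOCAB[probs.index(max(probs))]
print(ids, "->", next_token)
```

Swap "river" for "money" and the stand-in model picks "closed" instead. A real model learns those context effects from data rather than from an `if` statement, and it usually samples from the probabilities instead of always taking the top one.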

👉 Side note: Sometimes the nonsense is the best part. I once asked an LLM to write a poem about SEO audits, and it gave me a Shakespearean tragedy about backlinks. 10/10, would read again.

Language Models vs. Large Language Models

Quick distinction: language models aren’t new. We’ve had smaller statistical models for decades (think early spam filters, predictive text on your Nokia 3310).

What makes large language models special is—well—the large part. Instead of training on a few million sentences, they train on billions. Instead of a small neural net, they use massive deep learning architectures with billions (sometimes trillions) of parameters.
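For a feel of what "billions of parameters" means in practice, here's a back-of-the-envelope sketch. It assumes each parameter is stored as a 16-bit number (2 bytes), which is a common choice but not the only one, and it counts only the weights themselves. The model sizes are hypothetical round numbers, not specs of any particular model.

```python
def weights_memory_gb(num_parameters, bytes_per_parameter=2):
    """Memory needed just to hold the weights, in gigabytes (10**9 bytes)."""
    return num_parameters * bytes_per_parameter / 1e9

# Hypothetical model sizes, chosen only to show the scale.
for label, n in [("100 million", 100e6), ("7 billion", 7e9), ("1 trillion", 1e12)]:
    print(f"{label} parameters -> about {weights_memory_gb(n):,.1f} GB at 16-bit precision")
```

Training needs a good deal more memory than this, since it also has to hold gradients and optimizer state, which is part of why the hardware bills get so large.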

It’s like the difference between a kid who’s read 5 books and a kid who’s read every library on Earth. Both know how to read. One just has more material to draw from.

Fine-Tuning: How LLMs Learn Your Weird Requests

Here’s the deal: training a model from scratch is ridiculously expensive. We’re talking millions of dollars in computational resources (hi, GPUs and TPUs).

So instead of starting fresh, companies fine-tune existing foundation models for specific tasks. In other words, they keep training an already-trained model on a smaller, task-specific dataset.

Sometimes they even use reinforcement learning from human feedback (RLHF)—basically, humans telling the model, “Nope, that’s wrong. Try again.”
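Here's a deliberately tiny sketch of why fine-tuning beats starting over. The "model" is a single number (a weight in y = weight * x), and the datasets are made up, so treat it as an analogy in code rather than how any real LLM is tuned. It also shows plain supervised fine-tuning only, not RLHF. The point it illustrates: start from pretrained weights, and a handful of training steps on new data gets you much closer than the same handful of steps from zero.

```python
def train(weight, data, steps, learning_rate=0.01):
    """Plain gradient descent on mean squared error for the model y = weight * x."""
    for _ in range(steps):
        gradient = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= learning_rate * gradient
    return weight

def loss(weight, data):
    """Mean squared error of y = weight * x on the given data."""
    return sum((weight * x - y) ** 2 for x, y in data) / len(data)

# "Pretraining": many steps on a general dataset (here, y = 2x).
general_data = [(x, 2.0 * x) for x in range(1, 6)]
pretrained = train(0.0, general_data, steps=200)

# "Fine-tuning": only a few steps on a small, slightly different task (y = 2.2x).
task_data = [(1, 2.2), (2, 4.4), (3, 6.6)]
fine_tuned = train(pretrained, task_data, steps=5)
from_scratch = train(0.0, task_data, steps=5)

print("loss after fine-tuning:  ", loss(fine_tuned, task_data))
print("loss training from zero: ", loss(from_scratch, task_data))
```

Same five steps, very different results, because the fine-tuned run starts from a weight that is already almost right.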

👉 Related Read: Hugging Face has a solid explainer on fine-tuning language models.

Generate Text: Why Everyone’s Obsessed

Let’s be honest—text generation is the party trick that made LLMs famous. Need a blog post? Done. Need a product description that sounds human? Easy. Need a breakup text? …awkward, but possible.

But it’s not just content marketing fluff.

👉 Side note: I’ve personally seen a junior dev get outperformed by GPT-4 in writing boilerplate code. Brutal, but also a wake-up call.

Artificial Intelligence + Foundation Models

LLMs are foundation models. They’re not built for one single task—they’re trained on enough data to adapt to lots of tasks.

This flexibility is why they’re embedded across AI systems—from virtual assistants like Siri to enterprise-level AI tools like Microsoft Copilot.

The big catch? With great power comes… great hallucinations.

Yep, LLMs sometimes just make stuff up. They’ll confidently tell you “Abraham Lincoln invented WiFi.” That’s why human feedback and governance are critical.

Generative AI: LLMs’ Coolest Party Trick

Generative AI is the umbrella. LLMs are the engine. Together, they’re making stuff that feels… well, human.

Applications?

👉 Example: Anthropic’s Claude can handle massive documents and answer queries in plain human language.

Code Generation: The Sleeper Hit

Let’s talk devs. LLMs aren’t just “fluffy content generators”—they’re eating into programming languages now.

From autocomplete for Python to full-blown debugging in JavaScript, these models can literally write code. Are they perfect? No. But they’re a damn good co-pilot.

Pro tip: treat LLMs like a “junior dev who works fast but makes mistakes.” Double-check their work, but let them handle the grunt stuff.

Deep Learning & The Attention Mechanism

The secret sauce is the transformer architecture and its attention mechanism.

Older models (recurrent networks like RNNs and LSTMs) processed text one word at a time. Transformers? They look at all the words in a sentence at once and decide which ones actually matter to each other. That’s why they’re so good at handling sequential data and language tasks.
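For the curious, here's a stripped-down sketch of scaled dot-product self-attention, the operation at the heart of that mechanism. Two big simplifications, both assumptions for the sake of a short example: the word vectors are made-up 2-dimensional numbers, and the queries, keys, and values are just the raw inputs (a real transformer first passes them through learned weight matrices and runs many attention heads in parallel).

```python
import math

def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    biggest = max(scores)
    exps = [math.exp(s - biggest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values = the inputs."""
    dim = len(vectors[0])
    scale = math.sqrt(dim)
    # One row of attention weights per word: how much it "looks at" every word.
    weights = [softmax([dot(q, k) / scale for k in vectors]) for q in vectors]
    # Each output is a weighted blend of all the input vectors.
    outputs = [
        [sum(w * v[i] for w, v in zip(row, vectors)) for i in range(dim)]
        for row in weights
    ]
    return weights, outputs

# Made-up 2-dimensional embeddings for three words.
words = ["river", "bank", "money"]
embeddings = [[1.0, 0.0], [0.8, 0.6], [0.0, 1.0]]
weights, outputs = self_attention(embeddings)
for word, row in zip(words, weights):
    print(word, [round(w, 2) for w in row])
```

With these invented vectors, "bank" puts more weight on "river" than on "money" because its vector points closer to "river". In a trained model, those vectors and the extra weight matrices are learned, which is how context ends up shaping what each word means.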

👉 Nerd corner: The original “Attention Is All You Need” paper (2017) is the godfather of transformers. Worth a skim if you like math headaches.

Content Creation & Marketing Goldmine

If you’re a content marketer or SaaS founder, this is where it gets juicy. LLMs = scalable content creation.

The caveat? Google and smart readers can still sniff out robotic text. So the trick is: use LLMs for drafts, then add your human touch.

👉 Side note: Anyone saying “AI will replace copywriters” has never read raw AI output. It’s like eating raw flour—it needs baking.

The Basic Architecture (For Non-Engineers)

Here’s the skeleton of an LLM:

At the core: transformer models with billions of parameters + self-attention mechanism. That’s the magic combo.

Wrapping It Up: Why Large Language Models Are Important

Here’s my honest take: LLMs are both overhyped and underhyped.

Overhyped when people act like they’re sentient. (They’re not. They’re just really good parrots with probability calculators.)

Underhyped when people dismiss them as “just chatbots.” Nope. They’re foundation models transforming AI systems, customer queries, content creation, programming languages, and beyond.

If you’re a founder, marketer, or builder—you don’t need to know every equation behind deep learning architectures. But you damn well need to know what these models can (and can’t) do. Because the future of AI tools, customer feedback, and content creation? It’s being written in tokens, one next word at a time.